Four Principles Behind TalentGPT’s JD Design

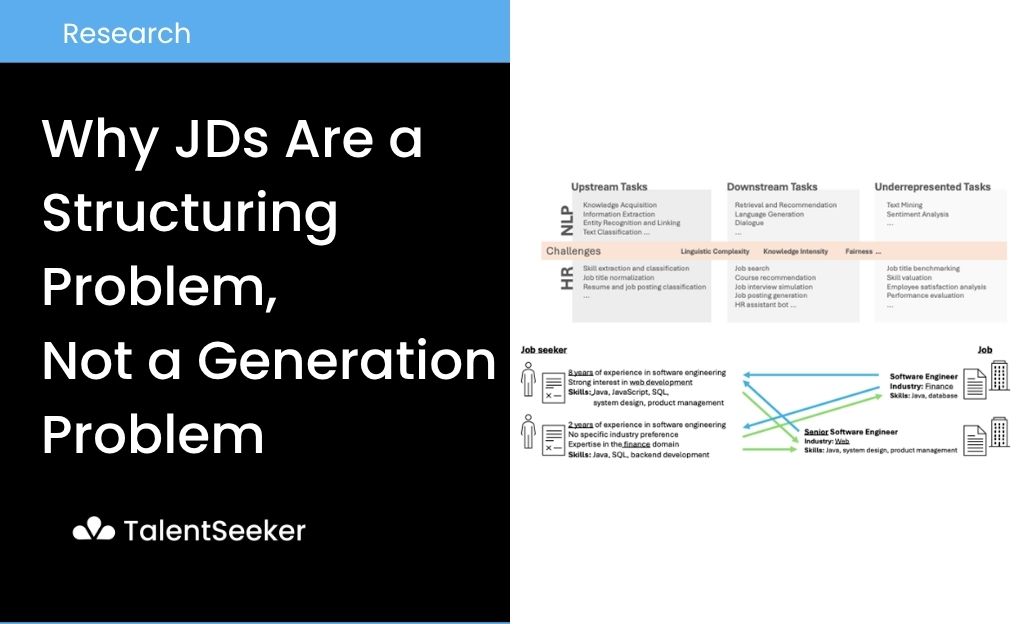

[fig 1] Landscape of NLP application within the HR domain – HR NLP Task Structure Diagram (Otani 2025)

Thanks to generative AI, creating a draft Job Description has become much easier. With just a few lines of input, it is now possible to quickly produce a well-written job posting. However, recent surveys that organize NLP applications in the HR domain show that real HR systems involve more than language generation alone. Tasks such as information extraction, text classification, recommendation, and generation are tightly intertwined. Each of these directly affects the quality of downstream decision-making inputs. Job Descriptions should be viewed in the same way. Writing a “plausible” job post and creating an “accurate” input that can be directly used for candidate search and matching are fundamentally different problems (Otani et al., 2025).

Research on organizational decision-making points to a similar conclusion. AI-assisted decisions require a more clearly defined decision search space than human judgment. This becomes especially important in hiring contexts, where alternatives must be compared and selected. It requires explicit specification of which attributes to optimize and which variables to evaluate candidates against. If the JD is ambiguous, then the search, recommendation, and matching processes built on top of it will inevitably inherit that ambiguity (Shrestha et al., 2019).

From this perspective, writing a JD is not simply a writing task. More precisely, it is not a sentence generation problem. Instead, it is a problem of constructing a structured input that is usable for hiring. When designing TalentGPT, the starting point was not “how to write better sentences.” It was “how to produce clearer hiring specifications.”

Why Generation Alone Is Not Enough

[fig 2] The problem of job recommendation – HR NLP Task Structure Diagram (Otani 2025)

General-purpose LLMs are strong at continuing fluent text even when the input is incomplete. However, research on LLM hallucination highlights that these models can generate content that appears plausible but is not factual or verified. In the context of Job Descriptions, this can manifest as unverified tech stacks, industry-standard-looking requirements, or experience criteria that do not align with the actual organization. The result may look polished at the sentence level, but it becomes unstable as an input specification (Huang et al., 2023).

Recent studies on job posting analysis point in the same direction. Krüger et al. argue that requirement extraction from job postings should not be treated as simple span extraction. Instead, it should be approached as a compositional entity modeling problem, where atomic entities are connected through typed relations. This structure includes roles, tools, experience levels, attitudes, and functional context. In other words, hiring requirements are not just a list of phrases in a paragraph. They are better understood as structured objects with internal relationships (Krüger et al., 2025).

At this point, the question shifts. It is no longer about how to write a more convincing JD. It becomes about how to produce a structure that can be directly used for candidate search.

Viewing Job Descriptions as Structured Data

The idea of treating job postings as structured data is not entirely new. The TREE Project in 1997 already proposed storing job ads in a partly language-independent schematic form. It enabled job seekers to search a database using multiple parameters. This can be seen as an early example of treating job postings both as readable documents and as searchable structures (Somers et al., 1997).

TalentGPT adopts the same premise. A JD is not just a description. It is a set of search constraints. Instead of treating it as a single paragraph, TalentGPT decomposes it into core dimensions that directly influence the candidate pool. In this article, the key dimensions are role, core responsibilities, domain, required skills, and experience level. From a system perspective, what matters is not how smooth the wording is. It is whether each dimension is defined or missing. This aligns with recent HR NLP research that frames the problem as a combination of extraction, classification, recommendation, and generation (Otani et al., 2025; Krüger et al., 2025).

Clarification-Driven JD Structuring

While the internal implementation of TalentGPT is not disclosed, its abstraction can be described as follows:

User Input → Confirmed Information Extraction → Core Dimension Mapping → Missing Dimension Detection → One High-Impact Clarification Question → Search-Ready JD

The key is not completion. It is clarification. Instead of expanding an initial request into a long JD immediately, TalentGPT first structures only the confirmed information. It then evaluates which dimensions are missing or ambiguous. After that, it selects a single question that can most significantly reshape the candidate search space.

The term “search-ready” is not a formal academic term. It refers to an internal design state where role, core responsibilities, domain, required skills, and experience level are sufficiently defined to be passed into downstream search processes.

This approach is also supported by information retrieval research. Studies on clarifying questions show that users often fail to fully express complex information needs in a single query. Systems can improve retrieval quality by asking targeted clarification questions. Later research treats the generation of clarifying questions itself as an independent problem. Human-AI interaction guidelines also recommend that systems should perform disambiguation when uncertain. Users should be able to easily dismiss or correct the system. The same principle applies to JD structuring. Instead of filling gaps with assumptions, it is safer to recover missing dimensions through targeted questions (Aliannejadi et al., 2019; Zamani et al., 2020; Amershi et al., 2019).

Four Design Principles of TalentGPT

1. Retain Only Verified Information

The first principle is simple. Do not include unverified information in the structure. A role labeled “Backend Developer” should not automatically imply specific languages, cloud environments, or frameworks. A marketing role should not automatically assume specific channel experience.

Hallucination research shows that the risk is not only completely incorrect outputs. It is also plausible but incorrect details that pass unnoticed. Since the JD serves as an upstream input for candidate search, such over-completion introduces significant risk (Huang et al., 2023).

This does not mean abandoning generation entirely. It is acceptable to normalize confirmed responsibilities into concise and consistent JD expressions. However, missing dimensions should never be filled with assumptions. In TalentGPT, generation is used for normalization and structuring, not expansion.

2. Treat JDs as Dimensions, Not Paragraphs

The second principle is to treat JDs as a set of dimensions rather than a block of text. Early job-schema approaches used searchable slots. More recent compositional extraction research shows that requirements are structured objects.

TalentGPT prioritizes dimensions such as role, responsibilities, domain, skills, and experience level. Instead of evaluating JD quality based on writing style or length, it evaluates whether these dimensions are properly defined. This significantly improves compatibility with downstream systems (Somers et al., 1997; Krüger et al., 2025).

From this perspective, JD completeness is redefined. It is not about how polished the sentences are. It is about whether the structure sufficiently constrains the candidate search space.

3. Prioritize Clarification Over Completion

The third principle is to ask precise questions rather than generate longer text when information is missing. Research shows that users rarely provide complete requirements in a single input. The same applies to JDs.

If a system fills missing dimensions with generated text, the output may appear natural but becomes unreliable for search. Instead, TalentGPT identifies the single most impactful missing dimension and asks a targeted question. The goal is not to increase the number of questions. It is to maximize information gain per question (Aliannejadi et al., 2019; Zamani et al., 2020).

This design is also critical for user interaction. Users respond more effectively when they understand what is missing and why the question matters. Clarification is not about prolonging the conversation. It is about reducing structuring cost.

4. Stop When It Is Search-Ready

The fourth principle is to define a sufficient state and stop at that point. While AI decision-making benefits from a well-defined search space, collecting more information indefinitely is not always optimal.

What matters is whether the search space is sufficiently defined for downstream tasks. AI systems perform better when the search space and objective function are clearly specified. At the same time, human-AI interaction guidelines emphasize that users should be able to easily edit, refine, or override outputs.

For this reason, TalentGPT uses “search-ready” as a stopping condition. Once role, responsibilities, domain, skills, and experience level are sufficiently defined, it moves to the next stage instead of continuing to ask questions (Shrestha et al., 2019; Amershi et al., 2019).

A good system is not one that asks many questions. It is one that knows when to stop.

Where TalentGPT Differs from General-Purpose GPT

The difference ultimately lies not in language fluency, but in what the system is optimizing for. General-purpose GPT models are highly effective at generating coherent text from incomplete input. This makes them useful for quickly drafting JDs.

However, when JDs are treated as inputs for candidate search, matching, and sourcing, the evaluation criteria change. HR NLP research shows that this domain involves not only generation but also extraction, classification, and recommendation. Job posting analysis research emphasizes structured representations of requirements. Hallucination research highlights the risks of over-completion (Otani et al., 2025; Krüger et al., 2025; Huang et al., 2023).

This is why the comparison “ChatGPT writes JDs, TalentGPT creates hiring-ready JDs” is not about superiority. It reflects a difference in problem definition. General GPT systems are optimized for continuation. TalentGPT is designed around faithful specification, selective clarification, and structured output.

When JDs are viewed as search specifications rather than documents, TalentGPT becomes the more appropriate system. This is not about benchmark performance. It is about the objective function used to design the system.

Conclusion

The core capability of JD-writing AI is not producing more sophisticated sentences. It is avoiding unverified information, structuring dimensions that shape the candidate search space, asking only when necessary, and stopping at the right moment.

When JDs are reframed as a structuring problem rather than a writing problem, it becomes clear why systems like TalentGPT are more suitable than general-purpose generative models. Writing a JD well and making a JD usable for hiring are not the same problem (Shrestha et al., 2019; Krüger et al., 2025).

Reference

Otani, N., Bhutani, N., & Hruschka, E. (2025). Natural Language Processing for Human Resources: A Survey. NAACL 2025 Industry Track.

Krüger, K., Binnewitt, J., Ehmann, K., Winnige, S., & Akbik, A. (2025). Improving Online Job Advertisement Analysis via Compositional Entity Extraction. EMNLP 2025.

Huang, L. et al. (2023). A Survey on Hallucination in Large Language Models: Principles, Taxonomy, Challenges, and Open Questions. arXiv.

Aliannejadi, M., Zamani, H., Crestani, F., & Croft, W. B. (2019). Asking Clarifying Questions in Open-Domain Information-Seeking Conversations. SIGIR 2019.

Zamani, H., Dumais, S. T., Craswell, N., Bennett, P. N., & Lueck, G. (2020). Generating Clarifying Questions for Information Retrieval. WWW 2020.

Amershi, S. et al. (2019). Guidelines for Human-AI Interaction. CHI 2019.

Shrestha, Y. R., Ben-Menahem, S. M., & von Krogh, G. (2019). Organizational Decision-Making Structures in the Age of Artificial Intelligence. California Management Review.

Somers, H. et al. (1997). Multilingual Generation and Summarization of Job Adverts: the TREE Project. ANLP 1997.